Roland Project LYDIA Phase 2: The AI Pedal That Makes Your Guitar Sound Like a Trumpet – in Real Time!

Roland Future Design Lab and Neutone Debut a New Approach to Neural Sampling at Superbooth 2026

Roland Future Design Lab and Tokyo-based AI company Neutone are presenting Project LYDIA Phase 2 at Superbooth 2026, an updated version of their pedal built around what they call neural sampling. It sounds more complicated than it actually is, and the idea behind it is genuinely fascinating. Let’s take a closer look.

Roland Project LYDIA Phase 2: Everything You Need to Know About the AI Pedal with Neural Sampling

What Is Project LYDIA?

The Roland Future Design Lab, or RFDL for short, is a research division of Roland Corporation focused on the future of musical instruments and music production. Not your standard product development team, but a unit that’s deliberately thinking well ahead. Project LYDIA Phase 2 is developed in collaboration with Neutone, a Tokyo-based AI company specializing in neural audio technology.

The shared goal: develop a tool that analyzes sounds, creates AI models from them, and then transfers the sonic character of those models onto other audio signals in real time. Anyone thinking of convolution, resynthesis, spectral processing, or the concept of a vocoder is in the right neighborhood, but not quite there. RFDL and Neutone describe this as a fundamentally new approach based on neural networks, and they simply call it “neural sampling.” The distinction is that the character of a sound is captured in a trained model rather than in a recording or a fixed filter.

Neural Sampling: What Does That Mean Exactly?

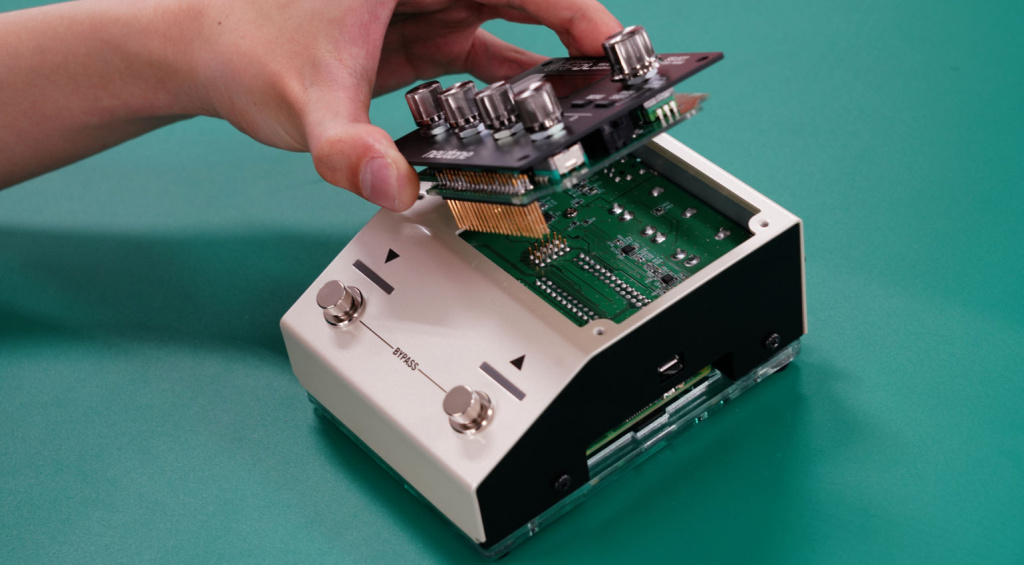

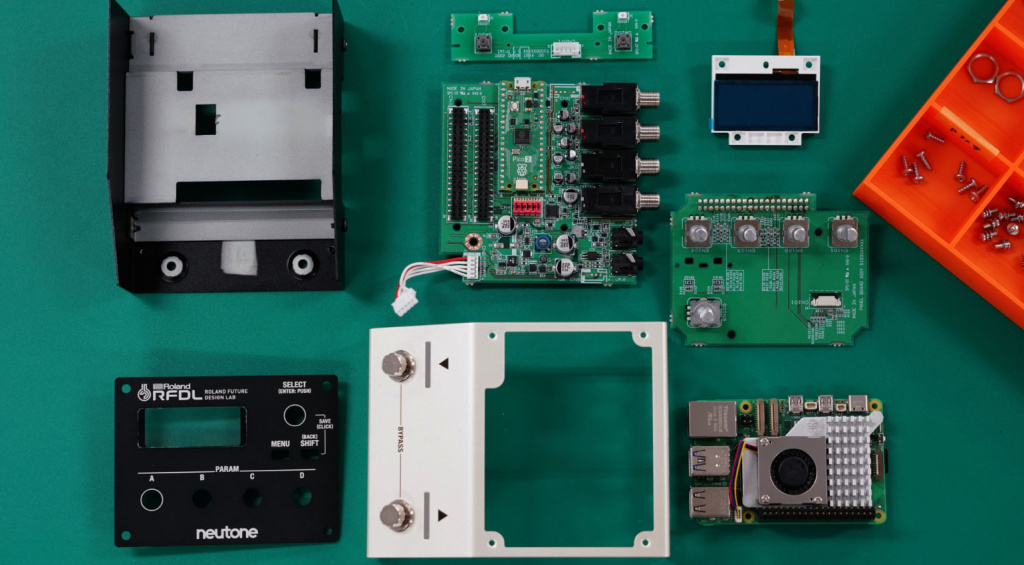

Neutone has been working with this technology for some time and already offers it in software form as a plugin called Morpho. Project LYDIA Phase 2 essentially puts Morpho into a hardware pedal. The new version runs on a Raspberry Pi 5 and has its own inputs and outputs, so no additional audio interface is required. Very handy.

Compared to the first version, the biggest improvement is MIDI integration: the pedal now fits much more cleanly into existing setups, with both DAW automation and external MIDI controller support on the table.
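For readers wondering what MIDI control of a pedal like this looks like in practice, here is a minimal Python sketch. It relies only on the standard MIDI Control Change format (a status byte of 0xB0 plus the channel, a controller number, and a value from 0 to 127). Roland and Neutone have not published a MIDI implementation for the prototype, so the parameter name `blend` and the choice of CC 74 are purely hypothetical.

```python
# Sketch only: the pedal's real MIDI map is not public.
# "blend" and CC 74 are hypothetical names chosen for illustration.
CC_MAP = {74: "blend"}


def parse_control_change(message: bytes):
    """Return (channel, controller, value) for a 3-byte Control Change, else None."""
    if len(message) != 3:
        return None
    status, controller, value = message
    # Control Change messages have 0xB in the upper nibble of the status byte.
    if status & 0xF0 != 0xB0:
        return None
    # Data bytes are 7-bit, so anything above 127 is invalid.
    if controller > 127 or value > 127:
        return None
    return status & 0x0F, controller, value


def cc_to_param(value: int, lo: float = 0.0, hi: float = 1.0) -> float:
    """Scale a 7-bit CC value (0..127) linearly into the range lo..hi."""
    return lo + (hi - lo) * (value / 127)


def apply_midi(message: bytes, params: dict) -> dict:
    """Return a copy of params updated by the message, or params unchanged."""
    parsed = parse_control_change(message)
    if parsed is None:
        return params
    _channel, controller, value = parsed
    name = CC_MAP.get(controller)
    if name is None:
        return params
    return {**params, name: cc_to_param(value)}


if __name__ == "__main__":
    state = {"blend": 0.0}
    # A controller knob turned fully up on MIDI channel 1.
    state = apply_midi(bytes([0xB0, 74, 127]), state)
    print(state)
```

A DAW automation lane sends the same kind of message as a hardware knob, which is why one mapping like this would cover both use cases.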

What’s Sonically Possible?

In theory, there are very few limits. You could train the AI model on the sound of a trumpet, a violin, a power drill, or even birdsong, and then transfer that character in real time onto a guitar, synthesizer, drum machine, or your own voice. The video shows what that sounded like with the first version of the pedal.

Roland is fully aware that plenty of musicians get nervous the moment AI enters the conversation. That’s why the team is clear about the intent: Project LYDIA is not meant to replace musical skill but to serve as a creative extension for musicians. The familiar pedal format is a deliberate choice to make the technology feel immediately tactile and inviting to experiment with. That’s a smart approach.

“During initial demos with professional audio developers, and through the overwhelming response from musicians worldwide, it became clear that Project LYDIA resonates deeply,” says Paul McCabe, head of the Roland Future Design Lab in Los Angeles.

When Is Project LYDIA Coming?

No release date or price has been announced yet. The current model is a prototype and can be seen at Superbooth 2026 at booths B023 and B026. In June, the pedal will also be on show at the Audio Developers Conference Tokyo. Whether a finished product follows this year is still an open question. We’ll be keeping a close eye on this one!

Verdict

Project LYDIA Phase 2 is one of the most genuinely interesting concepts at Superbooth 2026, precisely because it takes a route that most hardware developers aren’t even looking at. No amp simulations, no conventional effects processing, just a fundamentally different way of connecting sounds. Whether neural sampling makes it into a finished consumer product remains to be seen, but the prototype makes you want to find out. Very much so.

4.3 / 5.0
