Midimutant: a system that programs synths by artificial evolution

It can be really boring trying to program new sounds on complicated synthesizers. So why not get a computer to do it for you? Through a process FoAM call artificial evolution, the Midimutant can grow new sounds on hardware synthesizers based on a starting sound you give it.

Midimutant

The Midimutant was developed, apparently, with Aphex Twin. The accompanying video shows it generating sounds on a Yamaha TX7, a synth no one in their right mind would want to spend time programming.


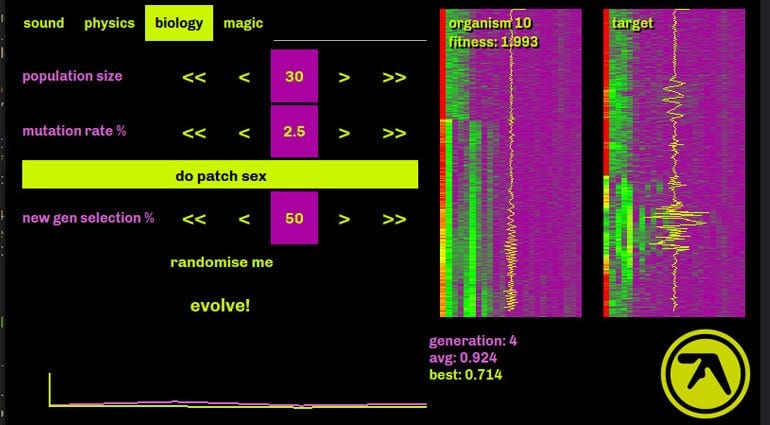

The Midimutant learns by analysing the sysex data and sampled sound data of a range of initially random patches. The audio of each patch is checked for similarity to the target sound using MFCC analysis (Mel-frequency cepstral coefficients, often used in speech analysis). The closest-sounding patches are then chosen as the basis of the next generation, with their sysex patch data serving as the genetic material. FoAM say that, unlike neural networks or other machine learning algorithms, artificial evolution does not need to model the underlying parameter space. So it doesn’t need to understand the synthesis functions; it just analyses the data. This means it can be used with any synthesizer that has a documented sysex dump format.
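To make the similarity check a little more concrete, here is a minimal, pure-Python sketch of comparing two single frames of audio by their MFCCs. It is not Midimutant’s code: the sample rate, frame length, 20 mel filters, 13 coefficients and the use of plain Euclidean distance are all assumptions chosen for illustration, and a real system would use an FFT library and look at many frames rather than one. A smaller distance means the two sounds are more alike.

```python
import cmath
import math


def power_spectrum(frame):
    """Naive DFT power spectrum of one frame (bins 0..N/2)."""
    n = len(frame)
    # Hann window reduces spectral leakage before the DFT
    windowed = [x * (0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1)))
                for i, x in enumerate(frame)]
    spectrum = []
    for k in range(n // 2 + 1):
        acc = sum(windowed[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        spectrum.append(abs(acc) ** 2 / n)
    return spectrum


def hz_to_mel(f):
    return 2595.0 * math.log10(1.0 + f / 700.0)


def mel_to_hz(m):
    return 700.0 * (10 ** (m / 2595.0) - 1.0)


def mel_filterbank(n_filters, n_fft, sample_rate):
    """Triangular filters spaced evenly on the mel scale, as weights over DFT bins."""
    low, high = hz_to_mel(0.0), hz_to_mel(sample_rate / 2)
    mel_points = [low + (high - low) * i / (n_filters + 1) for i in range(n_filters + 2)]
    bins = [int(math.floor((n_fft + 1) * mel_to_hz(m) / sample_rate)) for m in mel_points]
    filters = []
    for j in range(1, n_filters + 1):
        left, centre, right = bins[j - 1], bins[j], bins[j + 1]
        weights = [0.0] * (n_fft // 2 + 1)
        for k in range(left, centre):  # rising edge of the triangle
            weights[k] = (k - left) / max(centre - left, 1)
        for k in range(centre, right):  # falling edge of the triangle
            weights[k] = (right - k) / max(right - centre, 1)
        filters.append(weights)
    return filters


def mfcc(frame, sample_rate, n_filters=20, n_coeffs=13):
    spectrum = power_spectrum(frame)
    filters = mel_filterbank(n_filters, len(frame), sample_rate)
    # Log mel-band energies; the small floor avoids log(0) for empty bands
    log_energies = [math.log(sum(w * p for w, p in zip(f, spectrum)) + 1e-10)
                    for f in filters]
    # Unnormalised DCT-II turns band energies into cepstral coefficients
    return [sum(e * math.cos(math.pi * c * (j + 0.5) / n_filters)
                for j, e in enumerate(log_energies))
            for c in range(n_coeffs)]


def mfcc_distance(a, b, sample_rate):
    """Euclidean distance between the MFCC vectors of two equal-length frames."""
    return math.dist(mfcc(a, sample_rate), mfcc(b, sample_rate))
```

In an evolutionary loop, a patch’s fitness could simply be the negative of this distance to the target, so patches that sound closer to the target score higher.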
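The generational loop described above can be sketched as a basic genetic algorithm over lists of sysex data bytes. This is an illustration under assumptions, not FoAM’s implementation: the population size, keeping the top few patches as parents, single-point crossover, the per-byte mutation rate and the `fitness` callable are all hypothetical. In Midimutant’s case the fitness would come from sending a patch to the synth, recording it and comparing the audio with the target; here it is just a function passed in. Every byte is treated as a 7-bit value (0 to 127), whereas real synth parameters often have narrower ranges that a practical version would need to respect.

```python
import random
from typing import Callable, List

Genome = List[int]  # hypothetical: one patch as a list of 7-bit sysex data bytes


def mutate(genome: Genome, rate: float, rng: random.Random) -> Genome:
    # Each byte has probability `rate` of being replaced by a fresh random 7-bit value
    return [rng.randrange(128) if rng.random() < rate else b for b in genome]


def crossover(a: Genome, b: Genome, rng: random.Random) -> Genome:
    # Single-point crossover: the start of one parent joined to the end of the other
    point = rng.randrange(1, len(a))
    return a[:point] + b[point:]


def evolve(
    fitness: Callable[[Genome], float],
    genome_length: int,
    population_size: int = 20,
    generations: int = 50,
    keep: int = 5,
    mutation_rate: float = 0.05,
    seed: int = 0,
) -> List[Genome]:
    """Return the best genome of each generation (higher fitness is better)."""
    rng = random.Random(seed)
    # Generation zero: completely random patches
    population = [[rng.randrange(128) for _ in range(genome_length)]
                  for _ in range(population_size)]
    history = []
    for _ in range(generations):
        ranked = sorted(population, key=fitness, reverse=True)
        parents = ranked[:keep]
        history.append(parents[0])
        # Elitism: parents survive unchanged, so the best score never drops
        children = [
            mutate(crossover(rng.choice(parents), rng.choice(parents), rng),
                   mutation_rate, rng)
            for _ in range(population_size - keep)
        ]
        population = parents + children
    return history
```

Carrying the parents over unchanged means the best patch in each generation is never worse than the one before, which seems a sensible choice when every evaluation means playing and recording a sound on real hardware.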
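Because the genetic material is just the patch data, the synth-specific part can be as small as wrapping those bytes in the right sysex message. The sketch below assumes the widely documented DX7 single-voice (VCED) dump layout, which the TX7 is generally described as sharing: a Yamaha header, 155 parameter bytes, a 7-bit checksum and an end-of-exclusive byte. The function name and its validation are hypothetical, and the layout should be checked against Yamaha’s own documentation before sending anything to real hardware.

```python
from typing import List

VOICE_DATA_LENGTH = 155  # assumed DX7/TX7 single-voice parameter count


def dx7_voice_sysex(voice: List[int], channel: int = 0) -> bytes:
    """Wrap 155 voice parameters in a DX7-style single-voice sysex message (hypothetical helper)."""
    if len(voice) != VOICE_DATA_LENGTH:
        raise ValueError(f"expected {VOICE_DATA_LENGTH} bytes, got {len(voice)}")
    if not 0 <= channel <= 15:
        raise ValueError("channel must be 0-15")
    if any(not 0 <= b <= 127 for b in voice):
        raise ValueError("sysex data bytes must be 7-bit (0-127)")
    # Checksum: the value that makes (sum of data bytes + checksum) a multiple of 128
    checksum = (-sum(voice)) & 0x7F
    header = [
        0xF0,        # start of system exclusive
        0x43,        # Yamaha manufacturer ID
        channel,     # sub-status 0, MIDI channel in the low nibble
        0x00,        # format 0: single voice
        0x01, 0x1B,  # byte count as two 7-bit bytes: 1 * 128 + 27 = 155
    ]
    return bytes(header + voice + [checksum, 0xF7])  # 0xF7 ends the message
```

The resulting bytes could then be sent with any MIDI library that supports raw sysex output.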

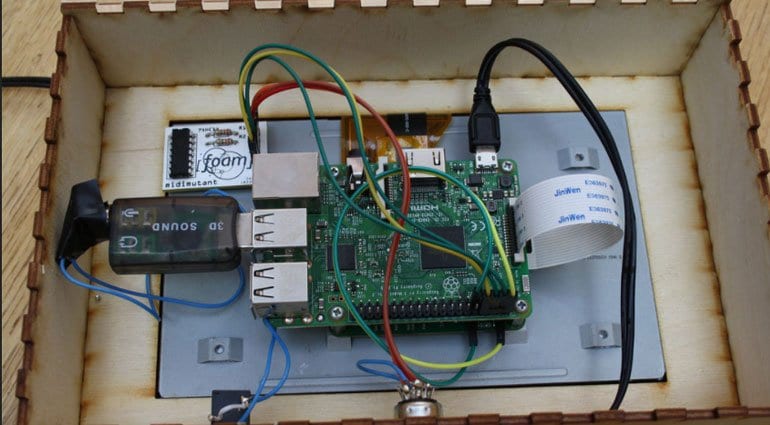

Midimutant runs on a Raspberry Pi, and the source code and setup instructions will be made available so that you can build your own.

The three example evolutions are remarkable. It feels a lot like the machine is tweaking parameters and seeing what it likes before moving on to another setting. I guess that comes from the fact that Midimutant is “listening” to the sounds and comparing them with what went before. So it’s not like hitting a “Random” button; the similarity and connection to the sound is a defining factor. You can also put in any sound you like, as demonstrated in example number 3, and just see what comes out the other end.


What a fascinating idea and a great way to breathe new life into old hardware. More information on the FoAM website.