KnittedKeyboard II: The cosiest of MIDI controllers

MIT has been messing around with the fabric of time and space and has knitted itself a multi-dimensional expressive controller that doubles as a scarf.

KnittedKeyboard II

“What happened to version 1?” I hear you ask. Well, we reported on the FabricKeyboard back in 2017, and I’m pretty sure this is an evolution of that. Anyway, the MIT Media Lab is interested in seamless fabric-based interactive surfaces, along with wearables, smart objects and responsive environments, and the KnittedKeyboard certainly ticks all the right boxes. The project is headed up by Responsive Environments researcher Irmandy Wicaksono.

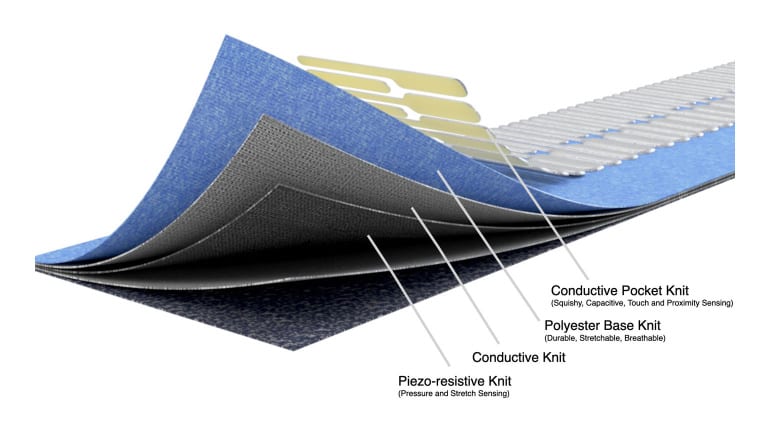

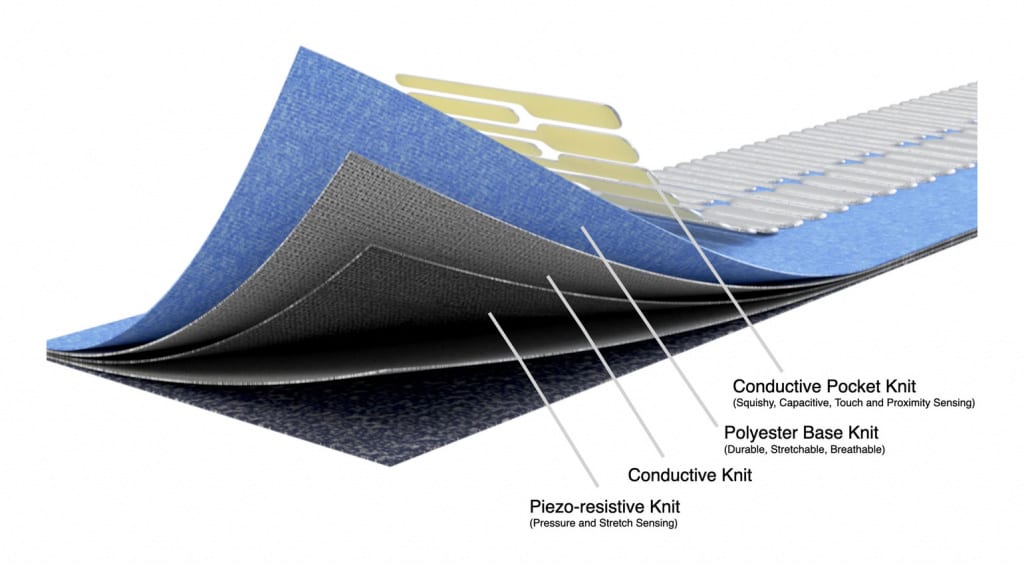

The prototype uses digital knitting technology to combine electrically conductive and thermoplastic fibres with polyester, producing a seamless 5-octave piano-patterned textile that provides MPE MIDI control. Within the layers there is a pressure- and stretch-sensitive piezo-resistive knit, a conductive knit, a breathable polyester knit and a conductive pocket knit that forms the squishy, capacitive, touch- and proximity-sensing keyboard.

Every key acts as an electrode which creates an electromagnetic field that can be disrupted by a hand’s approach. This gives us more than contact interaction: it can include gestures and the movement of hands and fingers to generate expressive data. This is something not found in most MPE controllers in production today.
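To make that a bit more concrete, here is a rough Python sketch of how per-key proximity and pressure readings could be turned into MPE-style MIDI messages. It is purely illustrative and not MIT's firmware: the function names, the normalised 0.0 to 1.0 sensor readings, the choice of CC 74 for proximity and the simple key-to-channel mapping are all my own assumptions. The status bytes themselves are standard MIDI.

```python
# Illustrative sketch only, not the KnittedKeyboard's actual implementation.
# Assumptions: sensor readings arrive normalised to 0.0-1.0, proximity is
# mapped to CC 74 and pressure to channel pressure, and keys are spread over
# the 15 member channels of an MPE lower zone by a simple modulo.

TIMBRE_CC = 74          # CC 74, commonly used as the third MPE dimension
MEMBER_CHANNELS = 15    # lower zone: channel index 0 is the master channel


def scale_to_7bit(value: float) -> int:
    """Clamp a normalised reading to 0.0-1.0 and scale it to 0-127."""
    clamped = max(0.0, min(1.0, value))
    return round(clamped * 127)


def member_channel(key_index: int) -> int:
    """Pick a member channel index (1-15) for a key.

    A real MPE controller allocates channels per sounding note; the modulo
    here is a simplification to keep the sketch short.
    """
    return 1 + key_index % MEMBER_CHANNELS


def expression_messages(key_index: int, proximity: float, pressure: float) -> list[bytes]:
    """Build raw MIDI messages carrying one key's hover and squeeze data."""
    channel = member_channel(key_index)
    timbre = bytes([0xB0 | channel, TIMBRE_CC, scale_to_7bit(proximity)])
    squeeze = bytes([0xD0 | channel, scale_to_7bit(pressure)])
    return [timbre, squeeze]


if __name__ == "__main__":
    # A hand hovering close to the first key without touching it.
    for message in expression_messages(key_index=0, proximity=0.8, pressure=0.0):
        print(message.hex(" "))
```

Because each key gets its own channel, a hand hovering over one key can shape that note's timbre without affecting its neighbours, which is the whole point of MPE.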


It’s an arrestingly beautiful idea that turns a functional MIDI controller into something more, something cuddly and intimate that invites conversation and interaction in ways that a ROLI keyboard wouldn’t. It’s quite fascinating, especially the non-touch quality of finding expression in the space around the KnittedKeyboard. I wonder if any of this technology will make it into a workable product?

More information on the KnittedKeyboard II

- MIT Media Research – KnittedKeyboard II page.
