AI Synthesis: The Future Of Synthesizers Is Coming Soon

Where will AI take synthesis?

In this special report on AI synthesis, we try to answer the question: could an AI-powered soft synth someday mimic a Roland Jupiter-8?

AI is everywhere these days, writing your news and helping you mix your songs. Synthesizers powered by AI are few and far between though. All that is about to change. Read on to find out how.

AI Synthesis

Would you be surprised to read that the above sentences were written by an AI? So would I, because they weren’t. But it says a lot about the sudden prevalence of AI in our lives that it could have been true. News stories written by AI like ChatGPT are becoming normal. AI is also infiltrating music, with algorithm-written songs and computer voices masquerading as famous singers. It’s a brAIve new world.

For music producers who want to make songs using AI, there are plenty of options, from mixing and mastering helpers by iZotope and Sonible to Myxt, a collaborative music workspace.

One area that hasn’t fully come online yet is AI synthesis—AI actually generating the sound. While still in its nascent stage, it is happening. Let’s take a look at where the technology is now and where it might be going. (Note that what follows is not intended to be an exhaustive list but just a snapshot of the technology currently available.)

Hardware Synthesizers With AI Synthesis

Given the newness of the technology, as well as the processing power required, it’s no surprise that hardware synthesizers capable of AI synthesis are rare. The main instrument of note is the Hartmann Neuron, a digital polyphonic synth from the early 2000s. With technology reportedly based on neural networks, the Neuron allowed users to re-synthesize and process samples that had been converted into digital computer models.

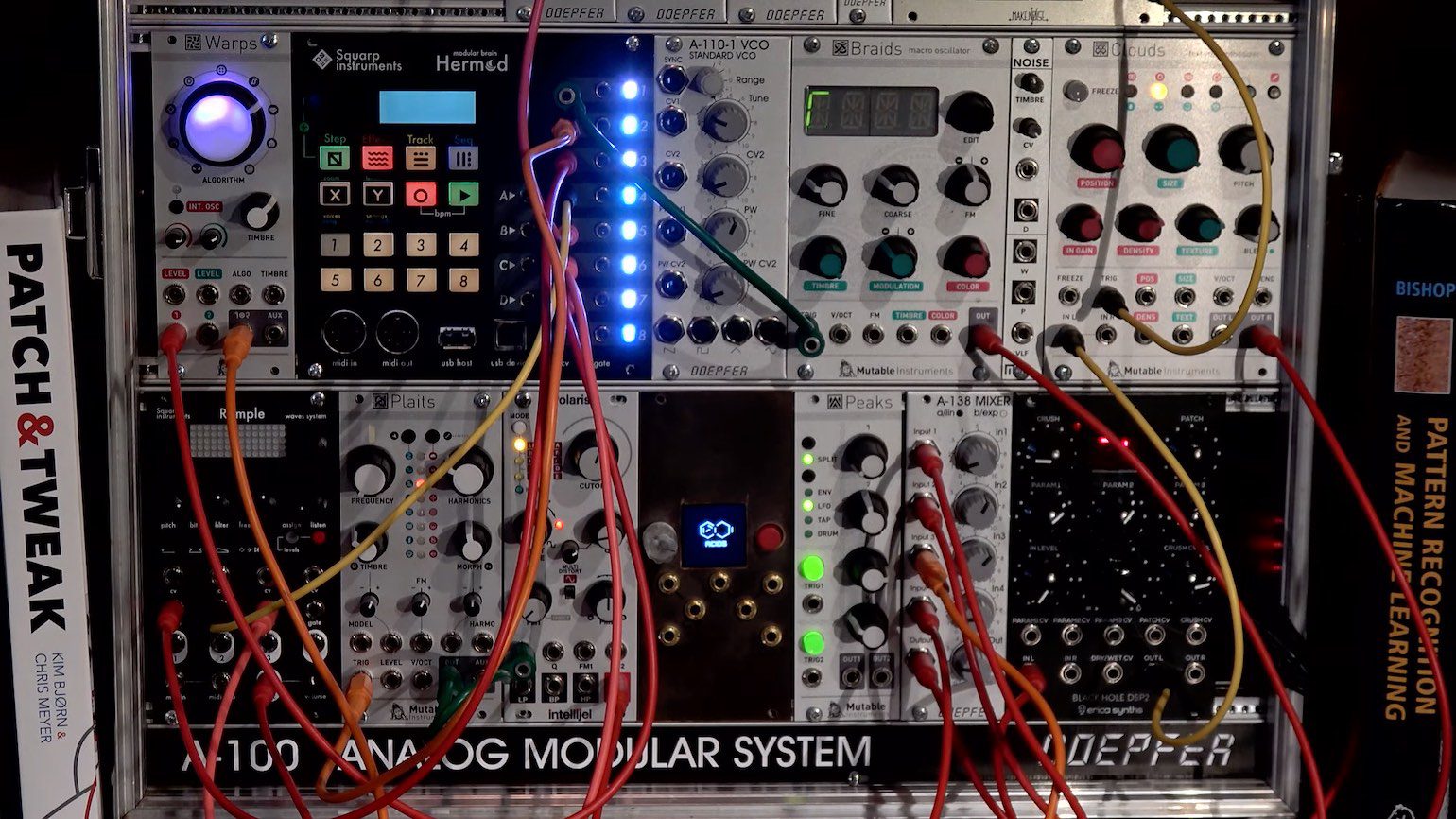

More up-to-date, but still in the prototype stage, is Neurorack, what developer Acids is calling “the first-ever AI-based real-time synthesizer.” The Eurorack synth relies on the Nvidia Jetson Nano, a small single-board computer with a 128-core GPU and a quad-core CPU. Neurorack is capable of generating impact sounds and can be controlled with CV from other modules. This is one to watch.

While not synthesis, per se, a few of Roland’s recent hardware synths such as the Jupiter-X and Juno-X have I-Arpeggio, an AI-powered arpeggiator made in collaboration with Meiji Gakuin University.

Software Synthesizers With AI Synthesis

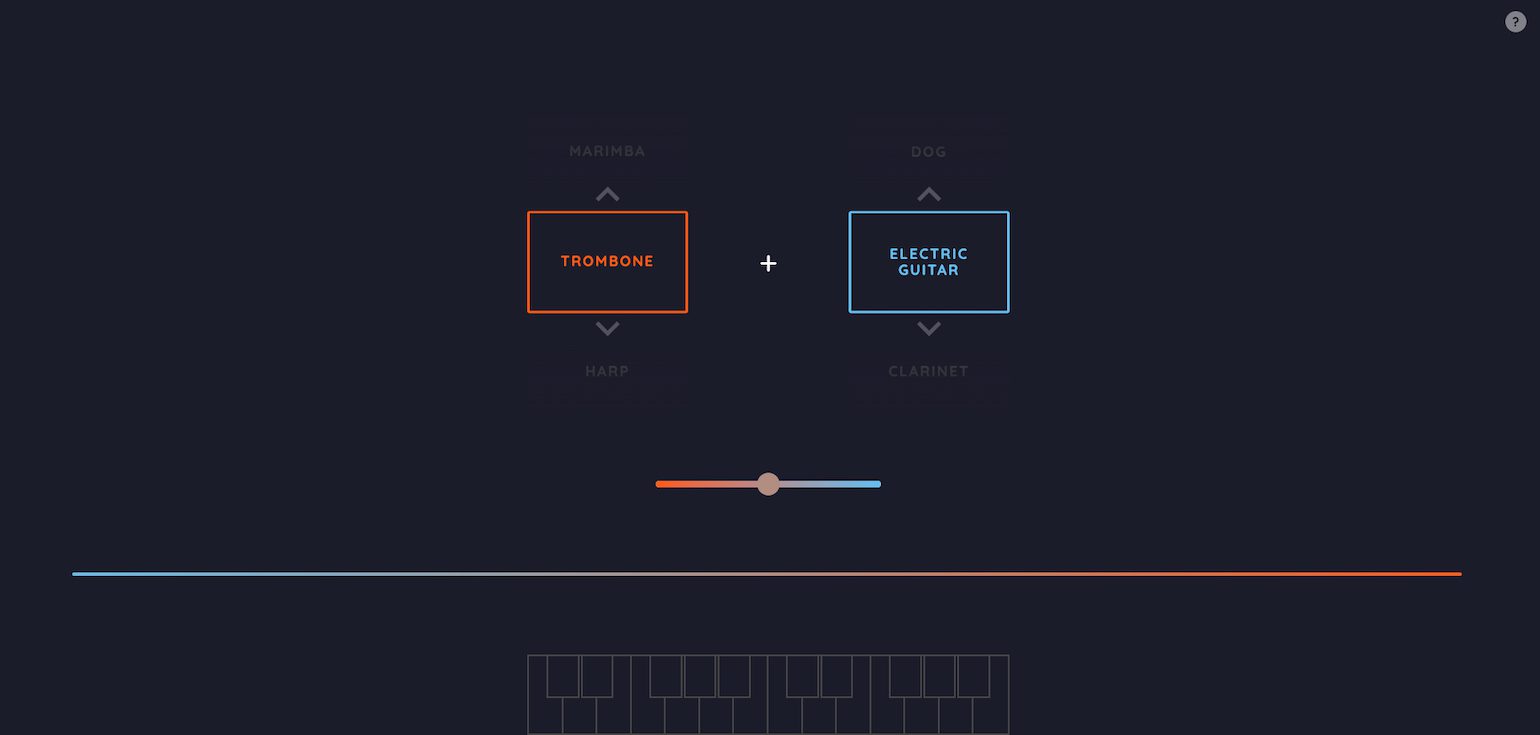

There are some interesting things happening with AI synthesis on the software side as well. NSynth (Neural Audio Synthesis) is a neural network-based application that lets you “interpolate between pairs of instruments to create new sounds” in the words of Magenta, the developer (Google Creative Lab was also involved). NSynth is available as a Max for Live device, as a web instrument called NPlayer, and as NSynth Super, a DIY hardware device.
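To make the idea of "interpolating between pairs of instruments" a little more concrete, here is a minimal sketch of blending two sound embeddings. It is not Magenta's code: the vectors `flute` and `bass` are made-up four-number stand-ins for the much larger embeddings a trained encoder would produce, and the neural decoder that would turn the blended vector back into audio is left out entirely.

```python
def interpolate(z_a, z_b, mix):
    """Linearly blend two equal-length embedding vectors.

    mix=0.0 returns z_a, mix=1.0 returns z_b, values in between
    give a weighted combination of the two.
    """
    if len(z_a) != len(z_b):
        raise ValueError("embeddings must have the same length")
    return [(1.0 - mix) * a + mix * b for a, b in zip(z_a, z_b)]


# Hypothetical embeddings, invented for illustration only.
flute = [0.2, -1.0, 0.5, 0.0]
bass = [1.0, 0.0, -0.5, 2.0]

# A point halfway between the two instruments in embedding space.
halfway = interpolate(flute, bass, 0.5)
print(halfway)
```

In a real system the interesting part is the decoder: the blended vector is fed to a model that generates audio, which is why the result can sound like a new instrument rather than two samples layered on top of each other.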

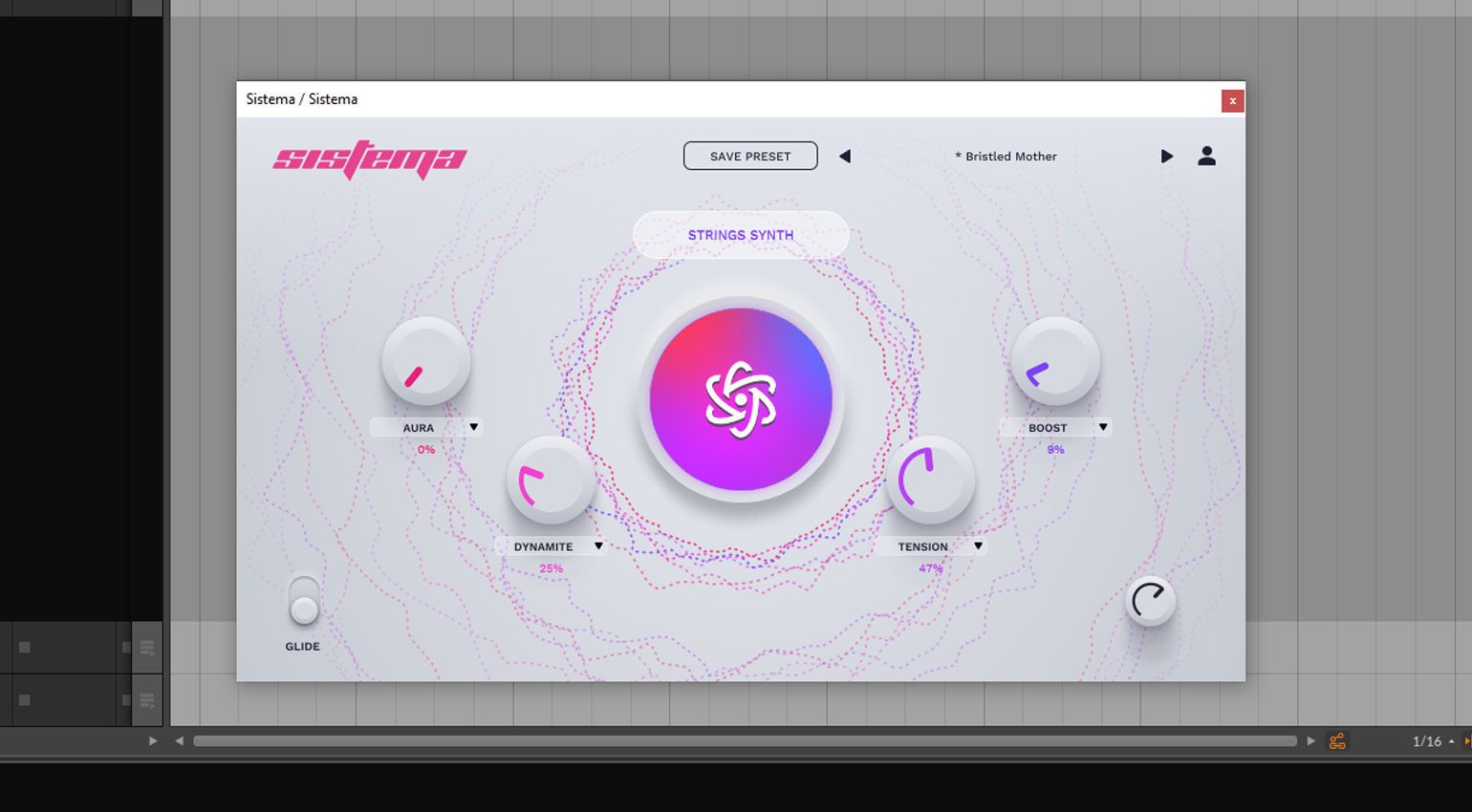

Sistema by guk.AI is an AI-powered plugin that you can use in your DAW. A sound-generating program, it lets you choose the starting point based on type and character, and then the AI generates a new sound for you. You can then further adjust it with a number of macros. The plugin is available to rent or buy and there’s a free option as well.

Emergent Drums is a drum machine, not a synthesizer, but the approach to synthesis is similar. Audialab’s plugin uses an AI trained on percussion sounds to generate new and unique drum samples. You can alter the sounds with a number of parameters, such as pitch, envelope, and filter, to suit your needs.

The Future Of AI Synthesis

This is very exciting stuff but I can’t help but feel like more could be happening. If AI can sing like The Weeknd and Liam Gallagher, couldn’t it also sound like a Jupiter-8 or CS-80? To get some answers, I turned to two experts: Martin Broerse of music plugin developer Martinic and Jessica Powell, CEO and co-founder of AudioShake, which makes AI-powered stem isolation software.

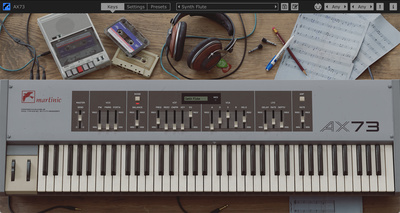

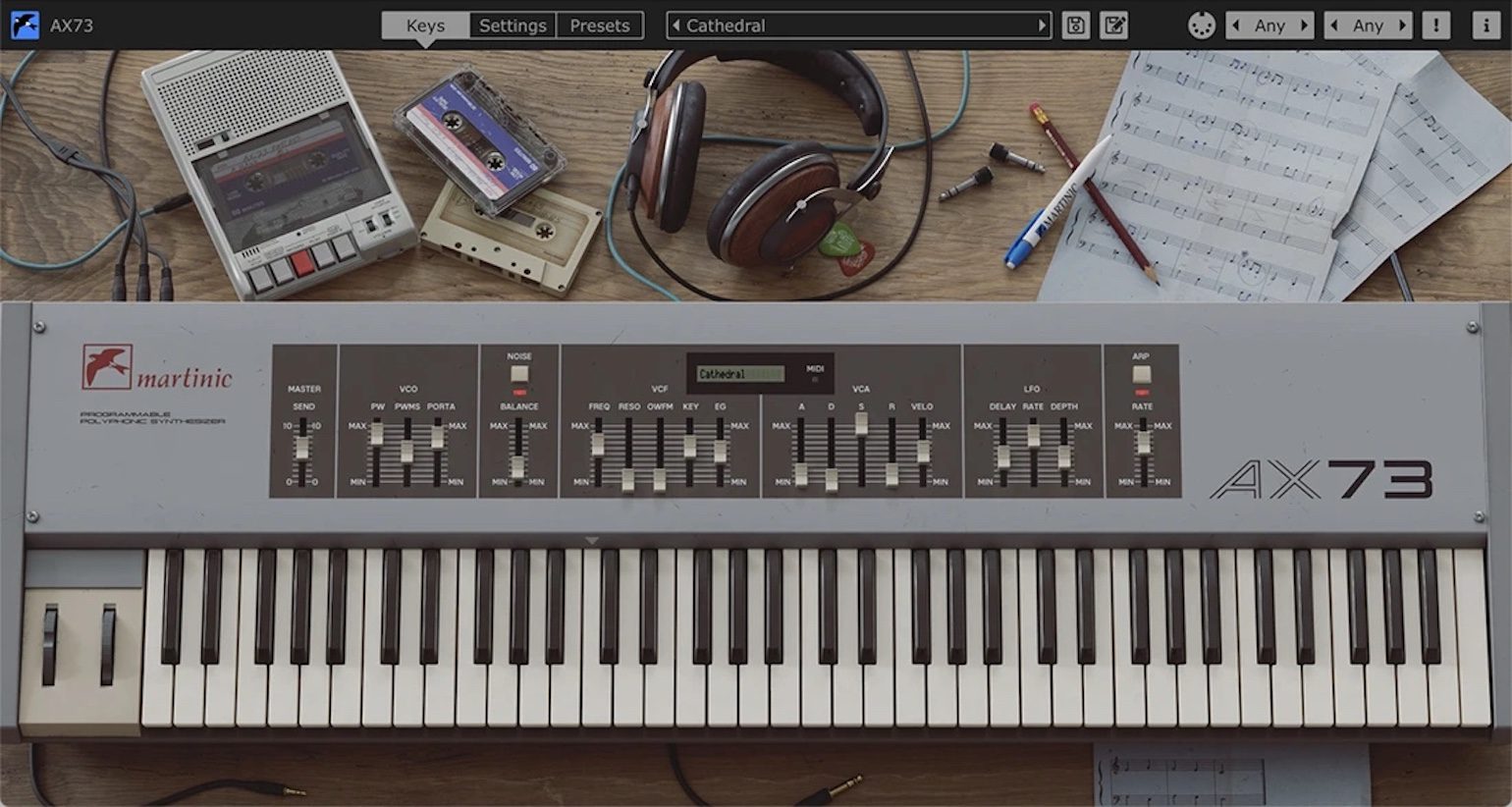

You may know Martinic for its detailed emulation of the Akai AX73. The team also helped YouTuber Doctor Mix realize his freeware ChatGPT-coded Doctor Mix AI Synth. I asked Martin how useful AI is right now for making soft synths. “At the moment AI is still a toddler writing code,” he said. “On the Doctor Mix AI Synth, this resulted in huge volume differences and a static ADSR. But as you can see here you can still make very cool music with it.”
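For readers unfamiliar with the term, an ADSR envelope shapes a note's loudness over time in four stages: attack, decay, sustain, and release. The sketch below is a generic linear ADSR, not the Doctor Mix AI Synth's code. The function name and default values are invented for illustration, and reading "static" as "the stage values cannot be adjusted by the player" is an assumption.

```python
def adsr_level(t, note_length, attack=0.01, decay=0.1, sustain=0.7, release=0.2):
    """Envelope level (0.0 to 1.0) at t seconds after note-on.

    The key is held for note_length seconds. attack, decay and release
    are durations in seconds; sustain is a level between 0 and 1.
    """
    def held(time):
        # Level while the key is still down.
        if attack > 0 and time < attack:
            return time / attack  # ramp up from 0 to 1
        if decay > 0 and time < attack + decay:
            # fall from 1 down to the sustain level
            return 1.0 - (1.0 - sustain) * (time - attack) / decay
        return sustain

    if t < 0:
        return 0.0
    if t < note_length:
        return held(t)

    # After note-off: fade linearly from wherever the envelope was to silence.
    since_release = t - note_length
    if since_release >= release:
        return 0.0
    return held(note_length) * (1.0 - since_release / release)


# Sample the envelope of a one-second note at a few points in time.
for t in (0.005, 0.5, 1.1, 2.0):
    print(t, round(adsr_level(t, note_length=1.0), 3))
```

A synth with an adjustable envelope would expose `attack`, `decay`, `sustain`, and `release` as controls. Hard-coding them gives every patch the same volume contour.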

Jessica from AudioShake, when asked the same question, gave an interesting answer: “If we’re talking about full mix generation, generally speaking, the best sounding ‘AI music’ right now is not generative AI. Rather, it’s using licensed stems provided by composers—or stems created by AudioShake on behalf of those composers—to services that use classic music theory and some AI, to combine those stems in new ways and generate new music from that. But fully AI-generated music is getting better and better. And already you can hear good-sounding instrument generation.”

Can AI Synthesis Copy A Jupiter-8?

Now for the million-dollar question. Can you foresee a time when AI could be used to copy the sound of famous synthesizers, sort of like we can train AI to sing like Drake or Liam Gallagher from Oasis today?

“I think AI can copy famous synthesizers,” answered Martin, “but I expect this will not happen in VST/AU/CLAP/AAX plugins but on websites that create complete songs without the use of a DAW. This is because computing time and AI models will be very big and would glitch the audio on current hardware if you (tried to) implement it locally. I think it can already be created with TensorFlow models today.”
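The glitching Martin describes comes down to a deadline: a plugin has to fill each audio buffer before the sound card needs it. The arithmetic can be sketched as below. The buffer size, sample rate, and the 20 ms and 2 ms processing times are illustrative assumptions, not measurements of any real AI model or plugin.

```python
def buffer_deadline_ms(buffer_size: int, sample_rate: int) -> float:
    """Time available to compute one audio buffer, in milliseconds."""
    return 1000.0 * buffer_size / sample_rate


def fits_in_real_time(processing_ms: float, buffer_size: int, sample_rate: int) -> bool:
    """True if the processing time stays under the buffer deadline."""
    return processing_ms < buffer_deadline_ms(buffer_size, sample_rate)


# A common DAW setting: 256-sample buffers at 48 kHz.
deadline = buffer_deadline_ms(256, 48_000)
print(f"{deadline:.2f} ms per buffer")

# Hypothetical processing times, for illustration only.
print(fits_in_real_time(20.0, 256, 48_000))  # a slow model misses the deadline
print(fits_in_real_time(2.0, 256, 48_000))   # a light DSP routine makes it
```

With those settings the plugin has a little over 5 ms per buffer. Anything that takes longer produces dropouts unless the buffer is enlarged, and a larger buffer adds latency when playing live.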

Jessica had a similar answer. “Eventually, sure. You can already get a sense of that by putting prompts around specific styles into services like Riffusion. It’s fun to play around with.”

The Main Issue With AI Synthesis Is Computing Power

The main barrier, though, as Martin mentioned, is computing power. However, he sees a way forward.

“I think things can change if the computers in the studio and at home get much more powerful,” he said. “At the moment we still have to write very optimized C++ code to not use too much memory and CPU power to make emulations work without glitching the system. At the moment that would be impossible on current hardware with an AI Synth. In the future website encryption is expected to be crackable in a few hours by … supercomputers.

“Because this milestone on the horizon will make … current encryption impossible it is expected that when this happens, to run banking software local computers will need to be replaced by ones 100 times more powerful to make new secure encryption for banking possible. So you could say that hackers … will make it possible in the future to create emulation VST/AU/CLAP/AAX plugins based on AI models that will be just as good as our current way of modelling instruments.”

AI models that will be just as good as current modelling plugins. I can’t wait.




3 responses to “AI Synthesis: The Future Of Synthesizers Is Coming Soon”


I wish we all would stop calling this current tech AI, though I guess there isn’t a better one and the press likes catchy. Artificial yes. Intelligent? I’m not so sure.

yeah deep learning and neural networks are not actually “artificial intelligence”; and specially they shouldn’t be seen as a replacement for anything creative human produce (maybe tedious and repetitive tasks; just like automation). they can and could be useful tools though; and that’s how they should be approached. Also they’re good at producing artificially made horror beyond our comprehension and I’m here for it.

So not too far off in the future then, when your tech-denying guitarist mate says “Pah! You keyboard players just press a button and the computer does it all for you!”….they might actually have a point 😂